Minimum Viable Product

The term Minimum Viable Product (MVP) often refers to a version of a product with just enough functionality to be usable and valuable to early customers while still being far from complete. By that definition, an MVP is already a running product. However, many people also regard MVPs as experiments in the sense of product discovery.

Confusing Concepts of MVPs

Before we discuss what an MVP is, let’s discuss what it isn’t. The following is an infamous illustration used to explain the idea of MVPs:

Would you travel long distances with the family on a skateboard? Or can you imagine Harley riders cruising the Alps on their e-scooters? Us neither…

MVPs as Experiments

Originally, however, Eric Ries defined MVPs differently in the context of Lean Startup:

The minimum viable product is that version of a new product which allows a team to collect the maximum amount of validated learning about customers with the least effort.

Eric Ries, The Startup Way

To focus on that learning aspect, particularly learning about value for customers, a number of different MVP experiments can be performed. These are particularly useful for validating completely new business ideas but might not be directly applicable to features that extend an existing product:

Landing Pages

At a very early stage, a simple landing page might be sufficient to explain a new product and test its value proposition. Given the low effort, it is even possible to run different versions of a landing page to test multiple variants. When it attracts customers from the target group, it’s worth continuing. When there is little to no response, maybe it wasn’t such a good idea after all…
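Comparing landing page variants comes down to simple arithmetic: sign-ups divided by visitors for each variant. The following is a minimal sketch of that comparison. The variant names, the visitor and sign-up counts, and the `VariantStats` helper are all made up for illustration and are not taken from any real experiment.

```python
from dataclasses import dataclass


@dataclass
class VariantStats:
    """Traffic numbers for one landing page variant (hypothetical data)."""
    name: str
    visitors: int
    signups: int

    @property
    def conversion_rate(self) -> float:
        # Share of visitors who signed up; 0.0 if nobody visited.
        return self.signups / self.visitors if self.visitors else 0.0


def best_variant(variants):
    """Return the variant with the highest conversion rate."""
    return max(variants, key=lambda v: v.conversion_rate)


# Illustrative numbers only.
variants = [
    VariantStats("headline_a", visitors=400, signups=20),
    VariantStats("headline_b", visitors=380, signups=38),
]

winner = best_variant(variants)
for v in variants:
    print(f"{v.name}: {v.conversion_rate:.1%}")
print("best variant:", winner.name)
```

With small visitor counts, the difference between variants can easily be noise, so a result like this is a hint for the next step rather than a proof.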

Explainer Videos

For somewhat more complicated topics, simple landing pages might not be sufficient, and a more elaborate story is required to get an idea across.

For example, before Dropbox was launched, CEO Drew Houston wanted to know whether people would actually use such a tool for syncing files between devices. At that time, such a solution didn’t exist and people had a hard time imagining how it might even work, so interviewing them would not have produced useful answers. A real working prototype didn’t exist either, because building one would have meant solving all the hard problems first. Instead, he decided to put up a short video pitching the overall idea in just a few minutes, essentially faking the complete product.

At the end of the video, Drew explains that the product is still in private beta and asks interested people to join the waiting list. Essentially overnight, the demo video went viral and tens of thousands of people subscribed to the waiting list. That was enough proof of value.

Fake Door Test or 404

Fake door testing is another useful way to rapidly validate whether a feature that doesn’t exist yet is worth exploring. Essentially, the fake door is a button or link that promises the expected functionality, but when users click it, they land on a page that explains that the feature isn’t ready yet or invites them to register for a waiting list. By measuring how often users actually take that action, the demand can be assessed and the next steps decided.
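The measurement behind a fake door test can be sketched in a few lines. The example below assumes a hypothetical event log of `(user_id, event)` pairs, where `"view"` means the user saw the fake door and `"click"` means they clicked it. The log format, the function name, and the 10% threshold are assumptions chosen for illustration, not a standard.

```python
def fake_door_click_rate(events):
    """Share of distinct users who clicked the fake door after seeing it.

    `events` is an iterable of (user_id, event) pairs, where event is
    "view" or "click". Repeated clicks by the same user count only once.
    """
    viewers = {user for user, kind in events if kind == "view"}
    # Only count clicks from users who are also recorded as viewers.
    clickers = {user for user, kind in events if kind == "click"} & viewers
    if not viewers:
        return 0.0
    return len(clickers) / len(viewers)


# Hypothetical event log: four users saw the button, two of them clicked.
events = [
    ("u1", "view"), ("u1", "click"),
    ("u2", "view"),
    ("u3", "view"), ("u3", "click"), ("u3", "click"),
    ("u4", "view"),
]

MIN_CLICK_RATE = 0.10  # assumed threshold; pick one before running the test

rate = fake_door_click_rate(events)
worth_exploring = rate >= MIN_CLICK_RATE
print(f"click rate: {rate:.0%}, worth exploring: {worth_exploring}")
```

Counting distinct users rather than raw clicks keeps a single enthusiastic user from inflating the result. Fixing the threshold before the test starts avoids rationalizing a weak result afterwards.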

Concierge Test

When you spend a couple of days in a great hotel that has a concierge, that person not only welcomes guests but also arranges additional services, books transportation, coordinates luggage and procures tickets to events. That’s the exact same idea behind a concierge test in Product Management: offer a service to customers, see if they find that service valuable, and deliver on that promise manually, with as little technology as possible. Note that every such service usually has a price tag associated with it. So although it is a very time-consuming process, a concierge test provides valuable qualitative feedback on whether customers find the service useful enough to pay for it. Also, because of the direct interaction with users, concierge tests help generate solution ideas.

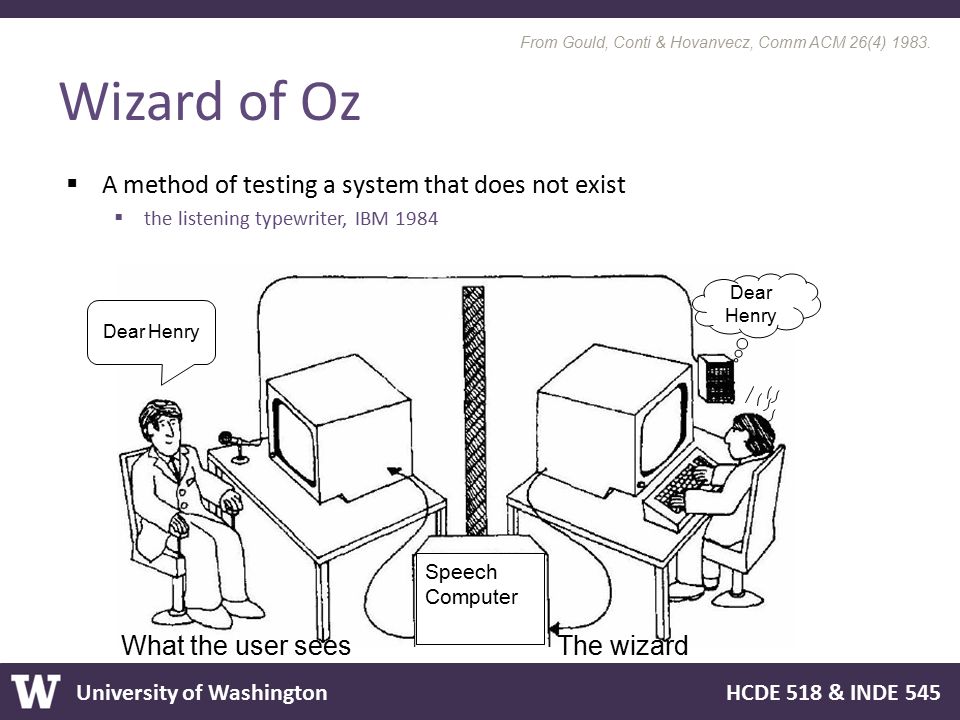

Wizard of Oz

While the interaction with a human is obvious to the user in a concierge test, this is not the case for Wizard of Oz experiments. Here, a solution is offered that, to the user, looks like a fully featured system, while behind the scenes the responses are generated by humans. This way, Wizard of Oz experiments help to validate solution ideas.

Piecemeal

Further Reading

Debunking Common Misconceptions Around the MVP

Be clear whether it’s an experiment or version 1